Product designer

Clint Health

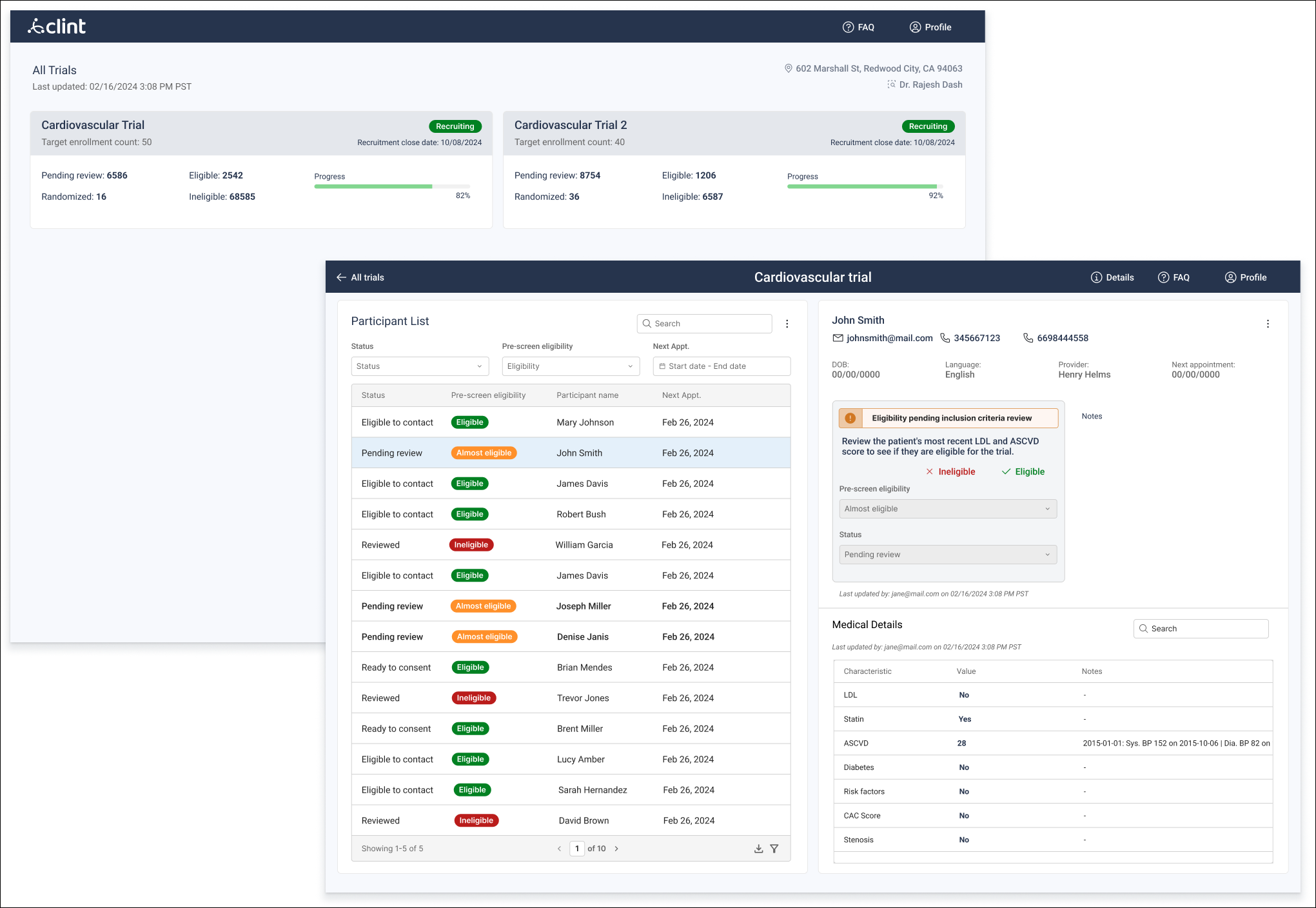

Time to reach recruitment goals was reduced by up to 30%, cutting the total time from 18 to 4 months

The process revealed better-quality candidates and screen failure rates up to 20% lower in clinical trials

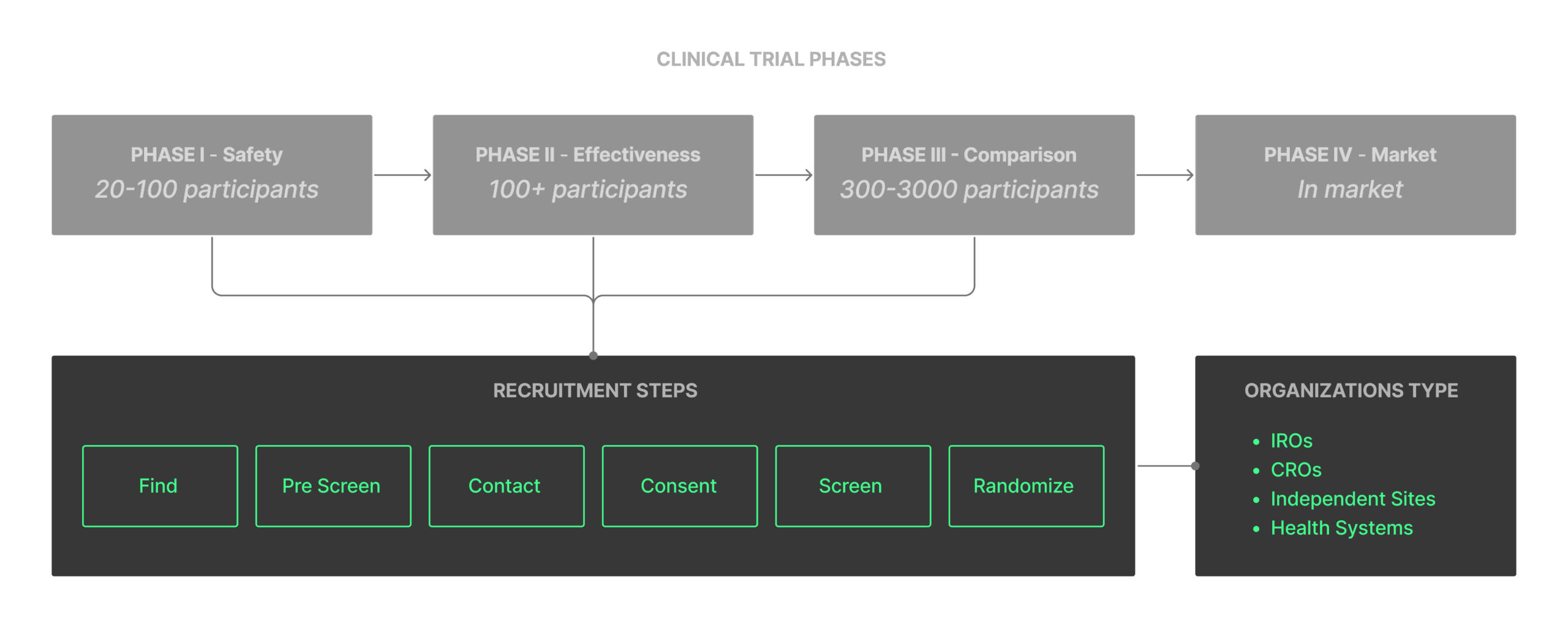

By combining my research with insights from a colleague experienced in clinical trial recruitment, I developed a deep understanding of the landscape in which the product would be used.

This included the relevant HIPAA regulations, clinical trial phases, the different types of organizations in the recruitment space, and their most common work hierarchies.

User interviews revealed that their workflow was highly manual. It involved complex navigation of each patient's EHR files, and there was no standardized process for storing information, which was often kept in Excel sheets or even physical binders.

Guided by business direction, we narrowed our focus to Integrated Research Organizations (IROs), which operate across multiple sites, trials and sponsors.

This focus helped us identify key requirements to support complex, multi-site trial management, such as:

Support multiple sites running different trials.

Provide trial-level metrics without exposing PHI.

Limit PHI visibility by role per HIPAA regulations.

Restrict PHI access to each site and clearly separate data in the UI for compliance.

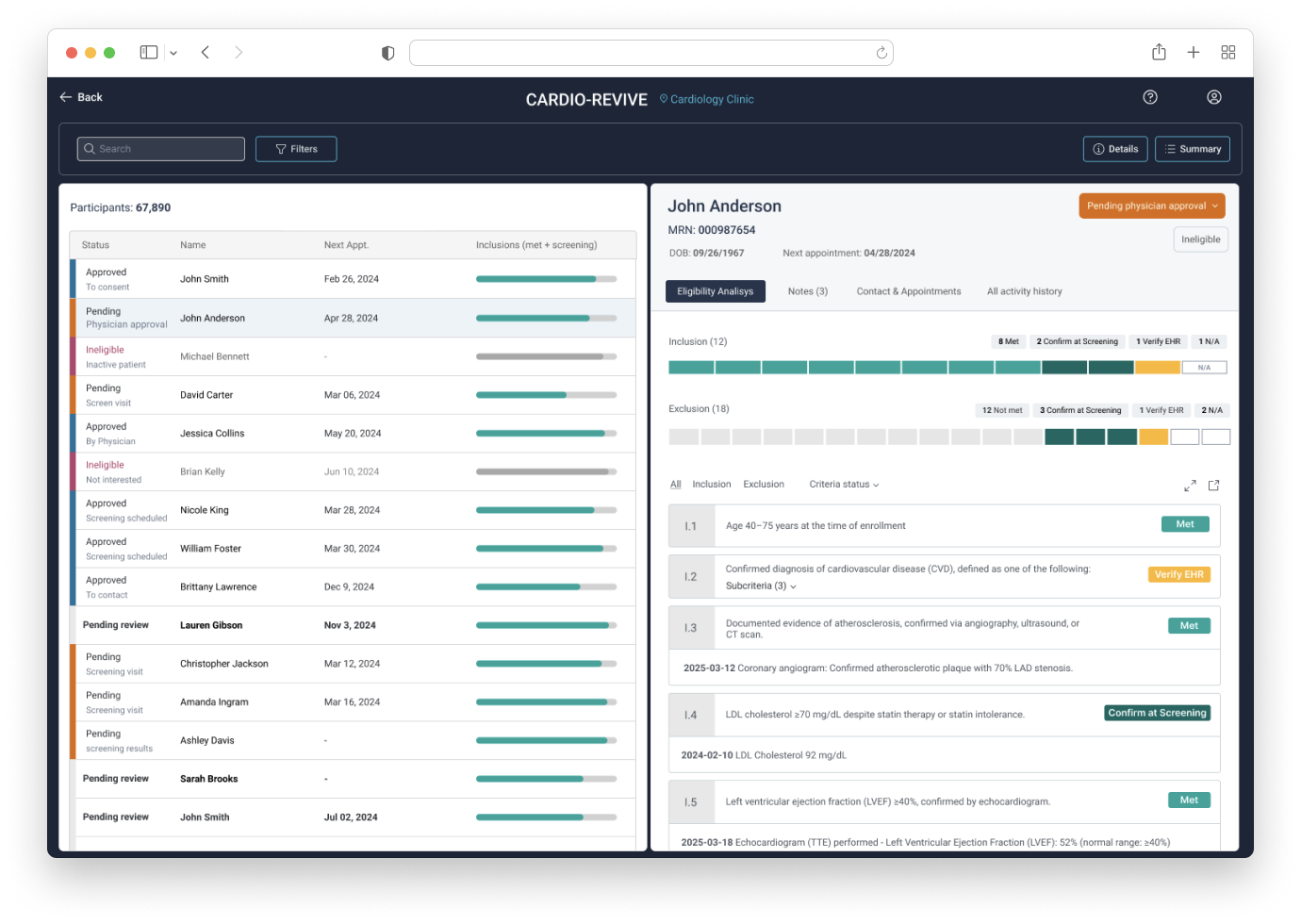

The series of steps involved in determining a participant's eligibility

This is where the company's data analysis capabilities had the greatest impact, saving users time by matching participants' EHR data against specific trial protocols to surface the candidates most likely to be eligible.

This is where users track each participant's status as they move through eligibility determination.

This step showed the most variation between sites, so we generalized each step to work across different workflows, improving retention by reducing the need to manage work outside the platform.

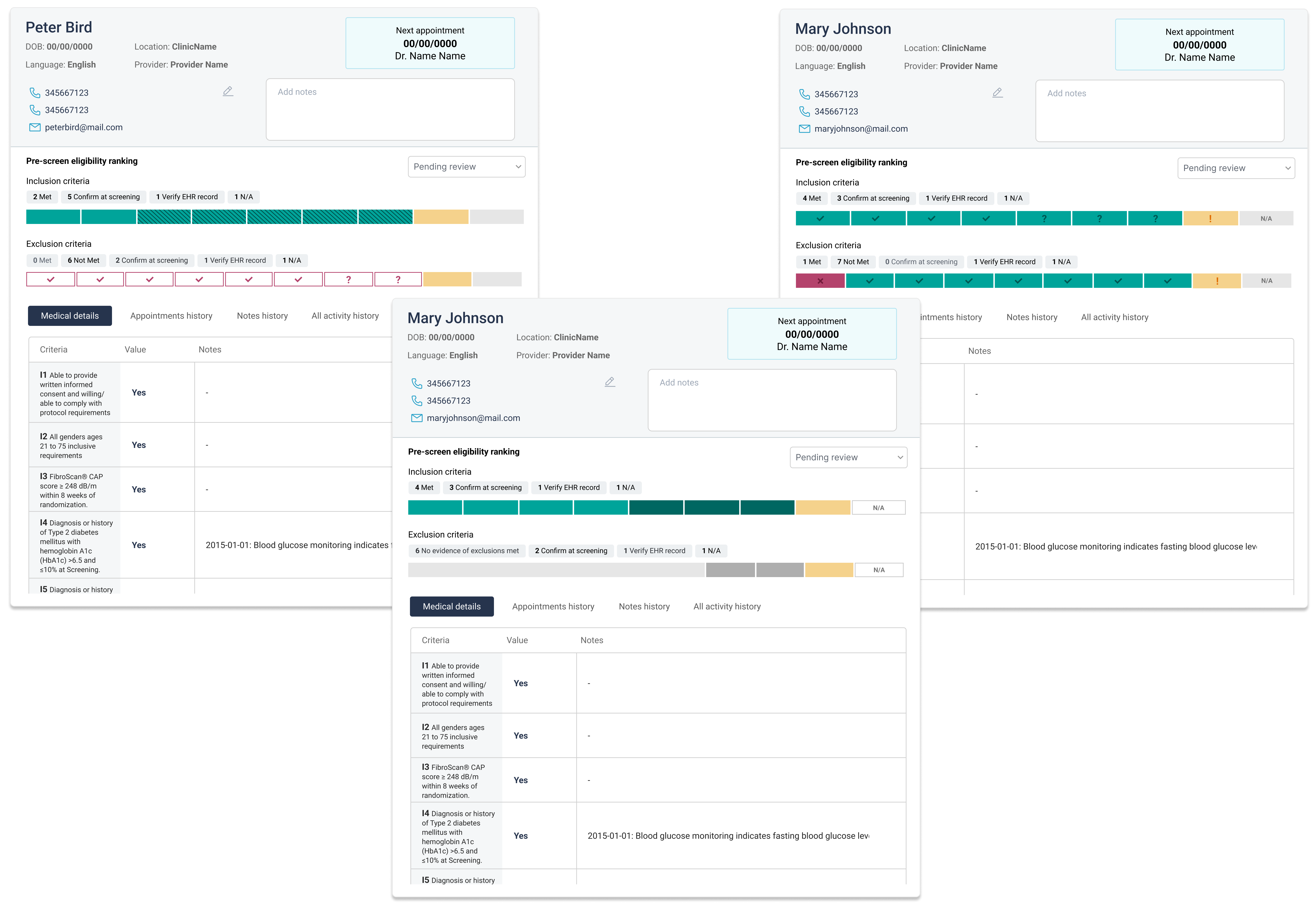

Understanding and exploring the possibilities of the data was key to the product’s success. We worked closely with the data science and engineering teams, translating UX research findings into actionable hypotheses.

Participant recruitment can be nuanced and often relies on clinical judgment rather than strict rules. We had to account for these gray areas when designing how data would surface in the workflow and how the back end would support flexible, context-aware decision-making.

The UX flows revealed that users needed a large amount of information readily available to analyze, along with the ability to move quickly between participants.

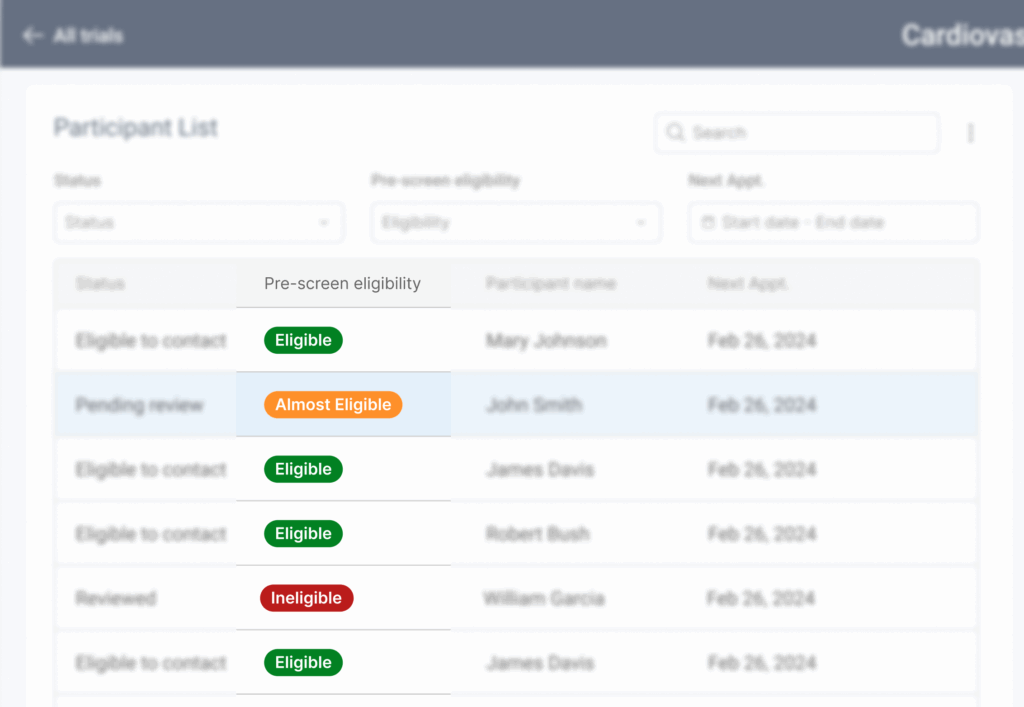

This led to a side-by-side layout: one side shows the participant list, giving an overview of the information needed to prioritize the most likely eligible participants, and the other side shows detailed information about the selected participant.

I moved quickly to prototyping and ran a few usability tests to validate the overall structure and ensure that flows, language, and labels were clear and actionable.

Testing showed the need for more helper text throughout the interface: because recruitment flows lack a standard, no single label would satisfy all users.

One major insight from the MVP involved the eligibility determination feature. The version users tested applied a tiered ranking to pre-screen and prioritize candidates before recruitment teams began their own analysis.

The product team worked closely together to resolve this issue. We had to consider the subjectivity of eligibility assessments while still saving users time and helping them focus on likely matches.

We replaced the tiered system with a matching scale showing how well each participant fit the protocol criteria. This scale appeared in both the participant table and the individual details view. In the details view, the scale was broken down to show which specific criteria were or were not met, along with the medical evidence supporting each one.

Users reported that the statuses proposed in the first version were often missed or too specific, so we grouped all statuses into three main tiers: approved, pending, and ineligible. Each tier included subcategories, plus a custom option.

This made it easier for users to navigate and direct their work, while overall UI refinements improved clarity and usability.

The product was adopted across multiple organizations and sites. Users reported a significant workflow improvement, especially in being able to find key information in one place instead of digging through EHRs and notes.

They also valued the ability to track participant status both individually and as a team, using activity logs to see updates, which made it easier for managers to report progress to sponsors.

Reported improvement metrics:

Time to reach recruitment goals was reduced by up to 30%, cutting the total time from 18 to 4 months

The process surfaced better-quality candidates and screen failure rates up to 20% lower in clinical trials

This project was fascinating. I built the product from scratch as the sole designer while working deeply with data science and engineering.

It was also challenging. Design decisions had to happen alongside data model development and backend engineering.

I relied heavily on design fundamentals, known usability patterns, and a clear UI hierarchy to guide the user through a highly technical and fast-paced workflow.